The key idea is to set the target outcomes for the next model in order to minimize the error. How are the targets calculated? The target outcome for each case in the data depends on how much changing that case's prediction impacts the overall prediction error.
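
Here is a minimal sketch of how such targets could be computed. It assumes the error is measured as (halved) squared error, which this post does not specify, and the function names and numbers are made up for illustration. Under that assumption, the target for each case is the negative gradient of the error with respect to that case's prediction, which works out to the plain residual.

```python
def squared_error(y_true, y_pred):
    """Total squared-error loss, halved so its gradient is simply (pred - true)."""
    return sum(0.5 * (t - p) ** 2 for t, p in zip(y_true, y_pred))


def next_targets(y_true, y_pred):
    """Negative gradient of the loss with respect to each case's prediction.

    For the halved squared error this is just the residual (true - pred).
    """
    return [t - p for t, p in zip(y_true, y_pred)]


def numeric_gradient(y_true, y_pred, i, eps=1e-6):
    """Estimate how much the total error changes when case i's prediction is nudged."""
    bumped = list(y_pred)
    bumped[i] += eps
    return (squared_error(y_true, bumped) - squared_error(y_true, y_pred)) / eps


y_true = [3.0, 5.0, 8.0]
y_pred = [4.0, 5.0, 2.0]  # current ensemble's predictions (made-up numbers)

targets = next_targets(y_true, y_pred)
print(targets)  # [-1.0, 0.0, 6.0]

# The case with the worst prediction is the one where a nudge changes the error most.
print(round(-numeric_gradient(y_true, y_pred, 2), 3))  # 6.0
```

The third case, where the current prediction is furthest off, gets the largest target, so the next model is pushed hardest to fix it.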

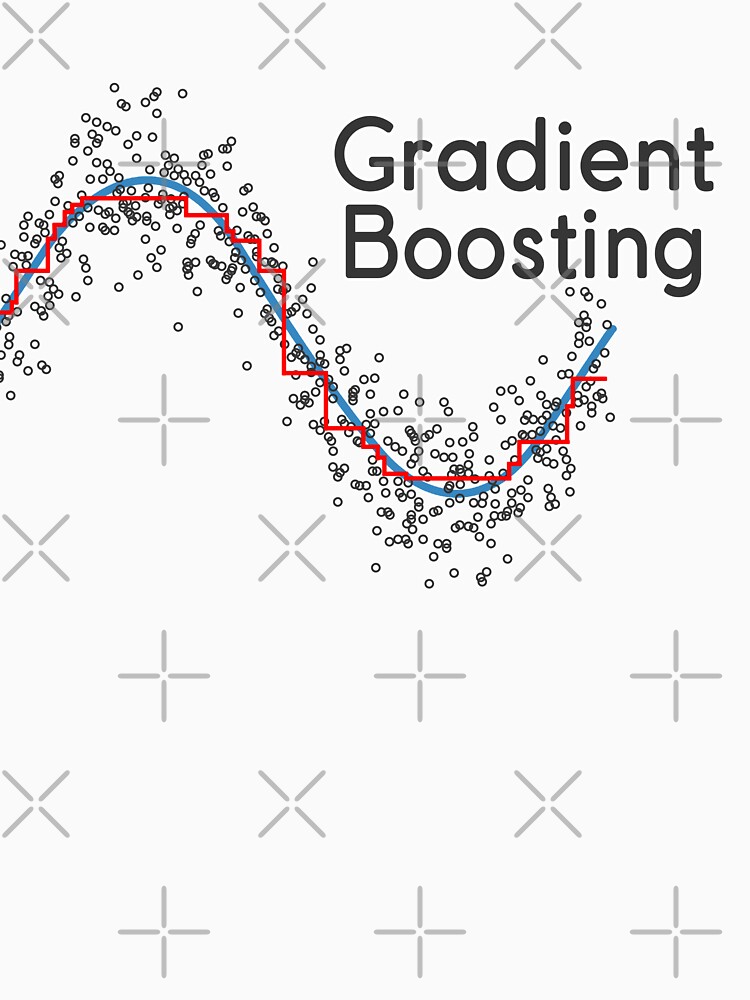

Gradient boosting is a type of machine learning boosting. It relies on the intuition that the best possible next model, when combined with previous models, minimizes the overall prediction error.
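
This intuition can be written a little more formally. The notation here is my own assumption and does not come from the post: $F_{m-1}$ is the combined ensemble built so far, $h$ is a candidate next model, $L$ is the error (loss) for a single case, and $(x_i, y_i)$ are the $n$ cases in the data.

$$
h_m = \arg\min_{h} \sum_{i=1}^{n} L\bigl(y_i,\; F_{m-1}(x_i) + h(x_i)\bigr),
\qquad
F_m(x) = F_{m-1}(x) + h_m(x)
$$

In words: the next model $h_m$ is the one whose predictions, added to those of the existing ensemble, give the smallest total error.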

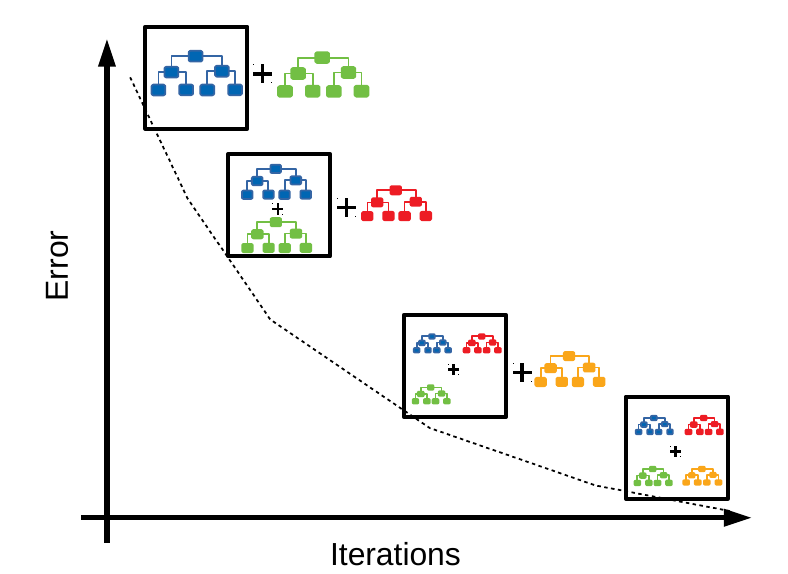

## Ensembles and boosting

Machine learning models can be fitted to data individually, or combined in an ensemble. An ensemble is a combination of simple individual models that together create a more powerful new model.

Machine learning boosting is a method for creating an ensemble. It starts by fitting an initial model (e.g. a tree or linear regression) to the data. Then a second model is built that focuses on accurately predicting the cases where the first model performs poorly. The combination of these two models is expected to be better than either model alone. Then you repeat this process of boosting many times. Each successive model attempts to correct for the shortcomings of the combined boosted ensemble of all previous models.
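
The boosting loop described above can be sketched in a few lines of plain Python. This is an illustration under several assumptions of mine, not a definitive implementation: the initial model is just the mean of the outcomes, each later model is a one-split tree (a "stump") on a single predictor, and "focusing on the cases predicted poorly" is done by fitting each new stump to the current residuals. The names `fit_stump` and `boost` and the toy data are hypothetical.

```python
def fit_stump(xs, targets):
    """Fit a one-split regression stump: choose the threshold with the lowest squared error."""
    best = None
    for threshold in sorted(set(xs))[:-1]:  # skip the max so the right side is never empty
        left = [t for x, t in zip(xs, targets) if x <= threshold]
        right = [t for x, t in zip(xs, targets) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = (sum((t - left_mean) ** 2 for t in left)
                 + sum((t - right_mean) ** 2 for t in right))
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return lambda x: left_mean if x <= threshold else right_mean


def boost(xs, ys, n_rounds):
    """Build an ensemble: a constant initial model plus n_rounds corrective stumps."""
    initial = sum(ys) / len(ys)  # initial model: predict the mean outcome
    models = []
    predictions = [initial] * len(xs)
    for _ in range(n_rounds):
        # Residuals are largest for the cases the current ensemble predicts worst.
        residuals = [y - p for y, p in zip(ys, predictions)]
        stump = fit_stump(xs, residuals)
        models.append(stump)
        predictions = [p + stump(x) for x, p in zip(xs, predictions)]
    return lambda x: initial + sum(m(x) for m in models)


def mean_squared_error(model, xs, ys):
    return sum((y - model(x)) ** 2 for x, y in zip(xs, ys)) / len(xs)


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
ys = [1.2, 1.9, 3.2, 3.8, 5.1, 6.0]  # toy data, made up for this example

one_round = boost(xs, ys, n_rounds=1)
many_rounds = boost(xs, ys, n_rounds=20)
print(mean_squared_error(one_round, xs, ys))
print(mean_squared_error(many_rounds, xs, ys))
```

On this toy data, the training error after 20 rounds is lower than after one, which mirrors the claim that the combined models do better than a single one. Training error alone says nothing about overfitting, so a real application would check performance on held-out data.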

# Gradient boosting how to

Gradient boosting is a technique attracting attention for its prediction speed and accuracy, especially with large and complex data. Don't just take my word for it: the chart below shows the rapid growth of Google searches for xgboost (the most popular gradient boosting R package). From data science competitions to machine learning solutions for business, gradient boosting has produced best-in-class results. In this blog post I describe what gradient boosting is and how to use it.